Trending topics to blog + X post.

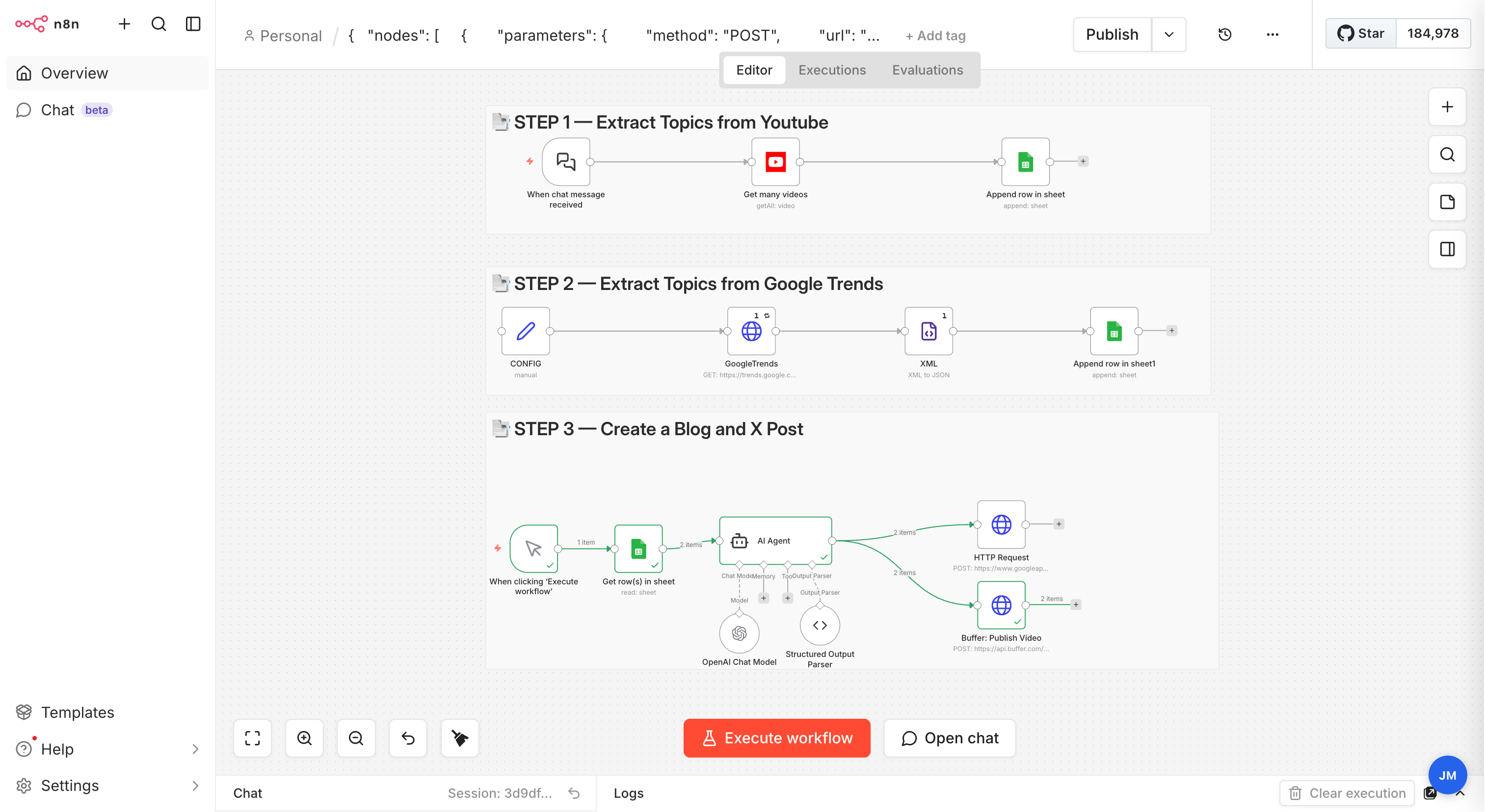

A single n8n workflow that listens for a keyword, pulls the most relevant YouTube videos and Google Trends data from the last 48 hours, uses GPT to write an SEO-ready blog post and a matching X post, and publishes both automatically through Blogger and Buffer.
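To make the "most relevant items of the last 48 hours" step concrete, here is a minimal Python sketch of that selection logic. It is not the workflow's actual node code: the `Item` shape, the `score` field, and the `recent_relevant` helper are all hypothetical names chosen for illustration, and it assumes each fetched item already carries a UTC timestamp and some relevance score.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Item:
    """Hypothetical record for one fetched result (not an n8n type)."""
    source: str          # assumed labels, e.g. "youtube" or "trends"
    title: str
    published: datetime  # timezone-aware UTC timestamp
    score: float         # assumed relevance score, higher is better


def recent_relevant(items, keyword, now, window_hours=48, limit=5):
    """Keep items that mention the keyword and fall inside the time window,
    then return the highest-scoring ones first."""
    cutoff = now - timedelta(hours=window_hours)
    hits = [
        item for item in items
        if item.published >= cutoff and keyword.lower() in item.title.lower()
    ]
    return sorted(hits, key=lambda item: item.score, reverse=True)[:limit]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    sample = [
        Item("youtube", "n8n automation tutorial", now - timedelta(hours=5), 0.7),
        Item("trends", "n8n rising searches", now - timedelta(hours=40), 0.9),
        Item("youtube", "old n8n video", now - timedelta(hours=72), 1.0),
    ]
    for item in recent_relevant(sample, "n8n", now):
        print(item.source, item.title)
```

The items that survive this filter would then be passed to the GPT step as context for the blog post and the X post; how the real workflow ranks relevance is not specified here, so the `score` ordering is only a stand-in.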